Why are some web apps suddenly handling support, search, and content generation better than entire teams? The answer is not hype; it’s the practical integration of large language models directly into the product experience.

LLMs can turn a standard web app into something far more useful: a system that understands requests, generates responses, summarizes data, and assists users in real time. But adding that capability well requires more than plugging into an API.

You need the right architecture, prompt design, data flow, latency strategy, and safeguards to make the experience reliable and cost-effective. A poorly integrated model feels gimmicky; a well-integrated one becomes a core feature users depend on.

This guide explains how to integrate LLMs into your web app with a focus on real implementation decisions, common pitfalls, and the technical tradeoffs that actually matter. Whether you are building chat, search, automation, or intelligent workflows, the goal is the same: make the model useful, controlled, and production-ready.

What It Takes to Integrate Large Language Models (LLMs) Into a Web App Successfully

What actually makes an LLM feature succeed in a web app? Not the API call. It’s the discipline around boundaries, latency, and failure handling. Teams usually underestimate how much product work sits around the model itself: deciding where free-form generation is allowed, where output must be constrained, and what the app should do when the model is slow, wrong, or unavailable.

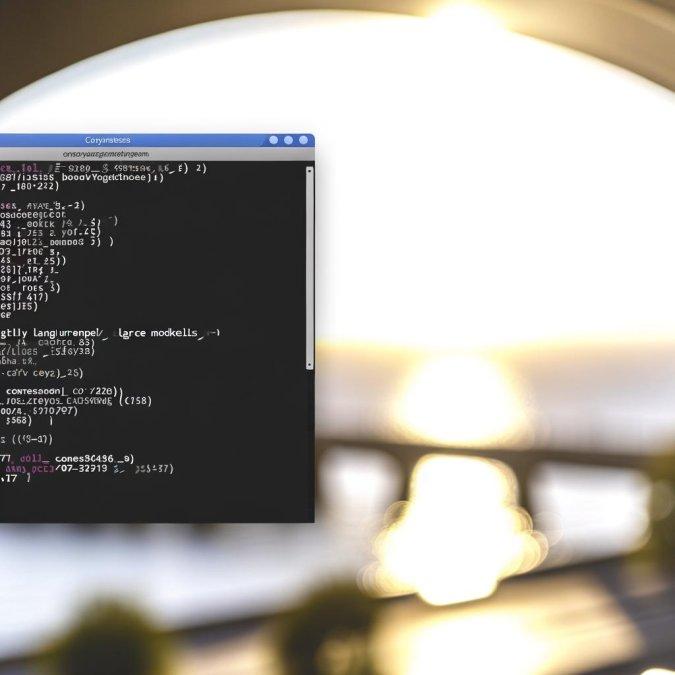

Start with a narrow operational contract. If your support dashboard uses an LLM to draft ticket replies, define inputs, acceptable output format, redaction rules, and fallback behavior before touching prompts. In practice, that often means pairing structured validation with orchestration tools like LangChain or Vercel AI SDK, then logging every prompt, response, token count, and user correction so the feature can be tuned from real usage instead of guesswork.

- Put the model behind a service layer, not directly in frontend code, so keys, retries, rate limits, and provider switching stay under your control.

- Design for partial trust: validate JSON, filter unsafe output, and gate high-risk actions such as sending emails or updating records.

- Measure user-visible quality, not just model quality: time to first token, abandonment rate, edit frequency, and task completion matter more than benchmark scores.
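The "partial trust" idea can be sketched in a few lines of server-side Python. This is a minimal illustration, not a definitive implementation: the action names, the `Decision` type, and the split between low-risk and high-risk actions are all assumptions made for the example.

```python
import json
from dataclasses import dataclass

# Hypothetical action names; which ones count as high-risk is an assumption.
HIGH_RISK_ACTIONS = {"send_email", "update_record"}
ALLOWED_ACTIONS = HIGH_RISK_ACTIONS | {"draft_reply"}


@dataclass
class Decision:
    status: str  # "execute", "needs_review", or "rejected"
    reason: str


def gate_model_output(raw: str) -> Decision:
    """Treat model output as untrusted: parse it, validate it, then gate it."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return Decision("rejected", "output is not valid JSON")

    if not isinstance(payload, dict):
        return Decision("rejected", "expected a JSON object")

    action = payload.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return Decision("rejected", f"unknown action: {action!r}")

    if action in HIGH_RISK_ACTIONS:
        # High-risk actions never run automatically; a human approves them first.
        return Decision("needs_review", f"{action} requires human approval")

    return Decision("execute", "low-risk action")
```

Because the gate lives behind the service layer, the frontend only ever sees a decision, never raw model output it is expected to act on.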

One quick observation from production work: the first version often feels impressive in demos and frustrating in daily use. Why? Because users hit messy edge cases (long histories, vague instructions, copied junk data) that never showed up in staging.

Keep humans in the loop where the cost of error is real. For internal tools, a “review before apply” step saves a lot of cleanup, and for customer-facing workflows, weak observability will hurt you faster than a weak prompt.

How to Add LLM Features to Your Web App: APIs, Prompt Flows, and Backend Architecture

Start with the contract, not the prompt. Define one backend endpoint per user job (summarize ticket, draft reply, extract entities), then map each endpoint to a prompt template, model choice, timeout, and fallback path. In practice, teams that expose a single “ask-llm” route usually regret it once product managers want analytics, rate limits, and different retention rules.
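One way to make that contract concrete is a small registry with one entry per user job. The sketch below is illustrative only: the job names, template text, timeouts, and the placeholder model identifiers (`small-model`, `large-model`) are assumptions, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobContract:
    prompt_template: str
    model: str              # placeholder identifier, not a real model name
    timeout_seconds: float
    fallback_message: str   # what the UI shows when the call fails or times out


# Hypothetical jobs: one entry per user job, one backend route per entry.
CONTRACTS = {
    "summarize_ticket": JobContract(
        prompt_template="Summarize this support ticket in three sentences:\n{ticket}",
        model="small-model",
        timeout_seconds=5.0,
        fallback_message="Summary unavailable. Showing the original ticket.",
    ),
    "draft_reply": JobContract(
        prompt_template="Draft a polite reply to this ticket:\n{ticket}",
        model="large-model",
        timeout_seconds=15.0,
        fallback_message="Draft unavailable. Please write the reply manually.",
    ),
}


def build_request(job: str, **fields: str) -> dict:
    """Look up the contract for a job and render its prompt."""
    contract = CONTRACTS.get(job)
    if contract is None:
        # No generic catch-all route: unknown jobs are rejected outright.
        raise KeyError(f"no contract defined for job {job!r}")
    return {
        "model": contract.model,
        "timeout": contract.timeout_seconds,
        "prompt": contract.prompt_template.format(**fields),
    }
```

Keeping the contract in data rather than scattered through handlers also gives analytics and rate limiting a natural key: the job name.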

Keep the browser out of direct model access. Route requests through your server so you can inject system instructions, redact secrets, attach tenant context, and log token usage before calling OpenAI, Anthropic, or a gateway like OpenRouter. Small thing, but it matters.

- Use a prompt flow object, not raw strings: system message, task instructions, user payload, retrieval context, output schema.

- Validate outputs server-side with JSON schema or tools like Zod; retry only on parse failure, not on every “bad” answer.

- Queue slow jobs with BullMQ or Celery when latency can exceed your page budget.
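The first two points can be sketched together. The example below assumes a chat-style message format with `system` and `user` roles and takes the provider call as an injected `call_model` function, so nothing here depends on a specific SDK. `PromptFlow`, `run_flow`, and the required-keys check (a simple stand-in for full JSON schema validation) are hypothetical names for illustration.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class PromptFlow:
    system: str
    task: str
    user_payload: str
    retrieval_context: str
    output_keys: tuple  # keys the JSON reply must contain

    def to_messages(self) -> list:
        user_content = (
            f"{self.task}\n\n"
            f"Context:\n{self.retrieval_context}\n\n"
            f"Input:\n{self.user_payload}\n\n"
            f"Reply with a JSON object containing the keys: {', '.join(self.output_keys)}"
        )
        return [
            {"role": "system", "content": self.system},
            {"role": "user", "content": user_content},
        ]


def run_flow(flow: PromptFlow, call_model: Callable[[list], str], max_attempts: int = 2) -> dict:
    """Retry only when the reply cannot be parsed into the expected shape."""
    messages = flow.to_messages()
    for _ in range(max_attempts):
        raw = call_model(messages)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed JSON: worth another attempt
        if isinstance(data, dict) and all(key in data for key in flow.output_keys):
            # Well-formed output is accepted as-is; judging answer quality
            # is a separate concern and does not trigger a retry here.
            return data
    raise ValueError("model did not return valid structured output")
```

The caller decides what happens when `ValueError` is raised, which is usually the fallback path defined for that endpoint.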

A common pattern is synchronous preview plus asynchronous finalization. For example, a support dashboard can stream a first draft reply in 2-4 seconds, then run a second backend pass that checks policy language, account status, and refund rules before the agent sends it. That split lowers perceived latency without handing the model the final authority.
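The second pass does not have to be another model call. A rough sketch of a deterministic finalization check follows; the banned phrases, the `Account` fields, and the specific rules are invented for illustration and would come from your own policy and account systems.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    status: str            # e.g. "active" or "suspended" (assumed values)
    refund_eligible: bool


@dataclass
class Review:
    approved: bool
    issues: list = field(default_factory=list)


# Hypothetical policy list; real rules would live in configuration.
BANNED_PHRASES = ("we guarantee", "legal advice")


def finalize_draft(draft: str, account: Account) -> Review:
    """Second-pass check that runs after the streamed preview is shown."""
    issues = []
    lowered = draft.lower()

    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            issues.append(f"policy language: remove '{phrase}'")

    if "refund" in lowered and not account.refund_eligible:
        issues.append("draft mentions a refund but the account is not eligible")

    if account.status != "active":
        issues.append(f"account status is '{account.status}'")

    return Review(approved=not issues, issues=issues)
```

The agent sees the draft immediately and the review result a moment later, so the model proposes and the backend decides.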

One quick observation: retrieval pipelines fail more often from messy source data than from weak prompts. If your app pulls context from Notion, PDFs, and stale CRM notes, add a preprocessing layer that chunks, deduplicates, and stamps documents with freshness metadata before embedding them.
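A minimal sketch of such a preprocessing layer is shown below. It assumes documents arrive as `(source, last_updated, text)` tuples, uses naive word-count chunking, and treats chunks as duplicates when they match after lowercasing and whitespace normalization. Real pipelines usually need smarter chunking and fuzzier deduplication.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str
    last_updated: date  # freshness metadata carried alongside the text


def chunk_text(text: str, max_words: int = 50) -> list:
    """Split text into fixed-size word windows (whitespace is normalized)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def preprocess(documents: list, max_words: int = 50) -> list:
    """Chunk, deduplicate, and stamp documents before embedding.

    documents: list of (source, last_updated, text) tuples.
    When two chunks have the same normalized text, the fresher one wins.
    """
    newest = {}
    for source, last_updated, text in documents:
        for piece in chunk_text(text, max_words):
            key = hashlib.sha256(piece.lower().encode("utf-8")).hexdigest()
            existing = newest.get(key)
            if existing is None or last_updated > existing.last_updated:
                newest[key] = Chunk(piece, source, last_updated)
    return list(newest.values())
```

With the date stored on each chunk, the retrieval step can filter or down-rank stale material instead of leaving that judgment to the model.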

On the architecture side, separate orchestration from business logic. Let one service assemble context, call the model, and normalize outputs; let another service handle permissions, billing, and audit trails. When costs spike, the fix is often boring: better caching, tighter context windows, and fewer second-pass calls, not a model switch.

Common LLM Integration Mistakes in Web Apps and How to Improve Cost, Speed, and Accuracy

Most web apps do not fail at the model layer; they fail in the glue code around it. Teams often send entire chat histories, raw documents, and verbose system prompts on every request, then wonder why latency spikes and bills drift upward. A better pattern is to separate stable instructions from per-request context, summarize long threads every few turns, and cache repeatable outputs with tools like Redis or edge caches in Cloudflare.
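Both ideas fit in a short sketch. The in-memory dictionary below stands in for Redis or an edge cache, the cache key is a hash of the model, stable instructions, and per-request context, and `trim_history` collapses older turns into a summary. All names, and the placeholder summary text used when no summarizer is supplied, are assumptions for illustration.

```python
import hashlib
import json


class ResponseCache:
    """In-memory stand-in for a shared cache such as Redis."""

    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(model: str, instructions: str, context: str) -> str:
        payload = json.dumps([model, instructions, context])
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model, instructions, context, call_model):
        cache_key = self.key(model, instructions, context)
        if cache_key in self._store:
            self.hits += 1
            return self._store[cache_key]
        self.misses += 1
        result = call_model(model, instructions, context)
        self._store[cache_key] = result
        return result


def trim_history(turns: list, keep_last: int = 4, summarize=None) -> list:
    """Keep recent turns verbatim and collapse older ones into one summary."""
    if keep_last < 1:
        raise ValueError("keep_last must be at least 1")
    if len(turns) <= keep_last:
        return list(turns)
    older, recent = turns[:-keep_last], turns[-keep_last:]
    # `summarize` would normally be a cheap model call; the default is a placeholder.
    summary = summarize(older) if summarize else f"[{len(older)} earlier turns omitted]"
    return [summary] + list(recent)
```

Caching only makes sense for requests that should return the same answer every time, so the key deliberately includes everything that could change the output.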

Another expensive mistake: using the biggest model for every task. Don’t. Route work by difficulty: classification, extraction, moderation, and query rewriting usually belong on smaller, cheaper models, while only ambiguous or high-stakes requests should escalate to a stronger model. In one support workflow I’ve seen, intent detection ran on a lightweight model, and only refund disputes with policy edge cases hit the premium tier; response time dropped noticeably without hurting quality.
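A routing rule like this can start out very simple. The sketch below uses placeholder tier names and assumes the caller already knows the task type and whether the request is ambiguous or high-stakes. Sending unknown task types to the larger model is a conservative assumption, not something the workflow above prescribes.

```python
# Placeholder tier names, not real model identifiers.
SMALL_MODEL = "small-model"
LARGE_MODEL = "large-model"

# Task types that usually fit a smaller, cheaper model.
SIMPLE_TASKS = {"classification", "extraction", "moderation", "query_rewrite"}


def choose_model(task: str, high_stakes: bool = False, ambiguous: bool = False) -> str:
    """Pick a model tier by task difficulty, escalating only when needed."""
    if high_stakes or ambiguous:
        return LARGE_MODEL
    if task in SIMPLE_TASKS:
        return SMALL_MODEL
    # Assumption: task types we have not classified yet get the stronger model.
    return LARGE_MODEL
```

For example, `choose_model("classification")` stays on the small tier, while `choose_model("classification", high_stakes=True)` escalates.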

- Validate inputs before the LLM call. Strip boilerplate, deduplicate pasted text, and cap token budgets at the API gateway.

- Demand structured output. Validating responses against a JSON schema and retrying on invalid output, whether through the OpenAI or Anthropic APIs or an orchestration layer like LangChain, avoids fragile string parsing.

- Log prompts, model version, latency, token counts, and user feedback in one trace using Langfuse or Helicone; otherwise you are debugging blind.
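The input-validation step from the first point might look like the sketch below. The boilerplate patterns are examples only, and the four-characters-per-token figure is a rough heuristic assumed for illustration; a production gateway would count tokens with the provider's own tokenizer.

```python
import re

# Example boilerplate patterns; a real list would come from observed traffic.
BOILERPLATE_PATTERNS = [
    re.compile(r"^sent from my .*$", re.IGNORECASE),
    re.compile(r"^-{2,}\s*forwarded message\s*-{2,}$", re.IGNORECASE),
]

CHARS_PER_TOKEN = 4  # rough heuristic, not an exact tokenizer


def clean_input(text: str, max_tokens: int = 500) -> str:
    """Strip boilerplate, drop repeated lines, and cap the approximate size."""
    seen = set()
    kept = []
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped:
            continue  # blank lines carry no content for the model
        if any(pattern.match(stripped) for pattern in BOILERPLATE_PATTERNS):
            continue
        normalized = stripped.lower()
        if normalized in seen:
            continue  # pasted duplicates
        seen.add(normalized)
        kept.append(stripped)
    cleaned = "\n".join(kept)
    return cleaned[: max_tokens * CHARS_PER_TOKEN]
```

Running this before the model call means the budget cap applies to useful text rather than to signatures and repeated paste.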

A quick observation from production: retrieval quality usually matters more than prompt cleverness. Teams spend hours polishing prompt wording while an outdated chunking strategy feeds the model irrelevant text from six versions ago. Then everyone blames the model.

Accuracy also drops when developers skip fallback design. If retrieval confidence is low, ask a clarifying question, return a constrained answer, or hand off to search instead of forcing a hallucinated response. The cheapest token is the one you never send, and the fastest answer is often the one your app chooses not to generate.
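A fallback policy along these lines can be expressed as a small decision function. The thresholds and strategy names below are hypothetical, and the sketch assumes retrieval returns similarity scores in the range 0 to 1. Suitable cutoffs depend on the embedding model and should be tuned against logged traffic.

```python
def choose_response_strategy(
    scores: list,
    answer_threshold: float = 0.75,
    clarify_threshold: float = 0.4,
) -> str:
    """Decide whether to generate, ask for clarification, or hand off.

    scores: retrieval similarity scores in [0, 1] for the top results.
    """
    best = max(scores, default=0.0)  # no results counts as zero confidence
    if best >= answer_threshold:
        return "generate_answer"
    if best >= clarify_threshold:
        return "ask_clarifying_question"
    return "hand_off_to_search"
```

Only the first branch triggers a generation call, which is where the cost and latency savings come from.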

Summary of Recommendations

Integrating LLMs into a web app is ultimately a product decision, not just a technical one. The strongest implementations start with a narrowly defined use case, clear evaluation criteria, and safeguards for privacy, latency, and cost before scaling to broader features.

In practice, choose an approach that matches your constraints: use hosted APIs for speed, fine-tuning or retrieval for domain accuracy, and strong monitoring to catch quality drift over time. If an LLM cannot improve a user task in a measurable way, it should not be in the stack. Build for reliability first, then optimize for sophistication.

Dr. Julian Vane is a distinguished software engineer and consultant with a doctorate in Computational Theory. A specialist in rapid prototyping and modular architecture, he has dedicated his career to optimizing how businesses handle transitional technology. At TMP, Julian leverages his expertise to deliver high-impact, temporary coding solutions that ensure stability and performance during critical growth phases.